Hi!

Recently, I took the course “advanced machine learning” at university. While the curriculum was mathematically rigorous, the concepts were very cool, from the basic backpropagation algorithm to LoRA adaptations of large language models. Since backpropagation is the basis of modern AI and of most optimizations and extensions built on top of it, I implemented it from scratch some time ago in Python with the NumPy library. Now I want to continue, and I want to know: How difficult is it to run a trained model on a microcontroller? And what are the limitations of AI on such limited hardware? Let’s find out!

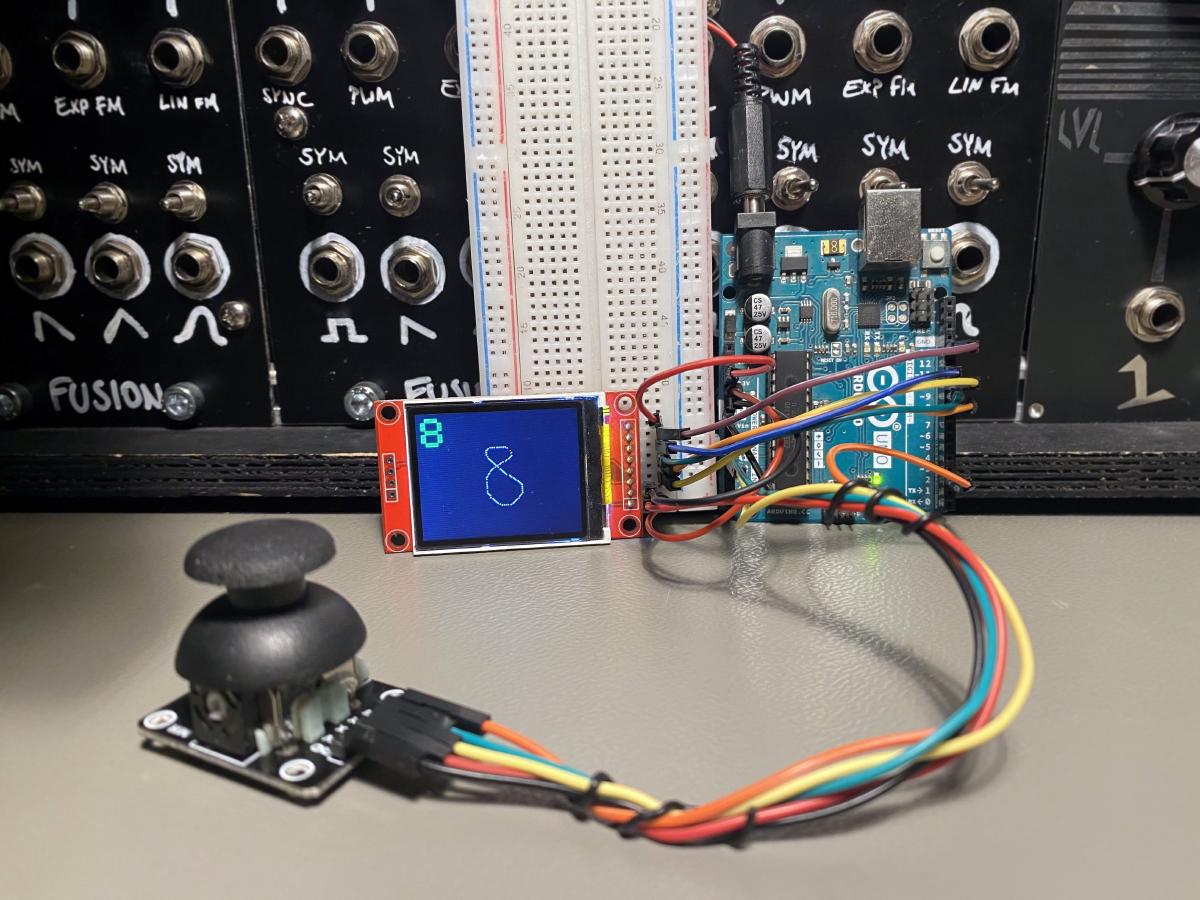

Demo #

This is what I’ve built!

You see an Arduino Uno, a microcontroller board with very limited computing power, connected to a small screen and a joystick. With the joystick, I can “draw” digits, which are then automatically recognized by the AI model running on the Arduino. The predicted digit pops up in the top left corner of the screen. As you can see, it’s sometimes wrong! In the article, I’ll dig into the dataset to find out why.

Essentials/TLDR for advanced readers #

Essentially, I transformed the MNIST handwritten digit dataset. Instead of training on 28x28 pixel greyscale images, I use a point cloud of 25 points for each image. It’s like the children’s game connect the dots: I don’t store the whole image, just the coordinates. I normalize the bounding box of the dots and sort them by their y and then x coordinates. While drawing the digit on the screen with the joystick, I collect points sequentially, ensuring a minimum distance of 2 pixels between dots, until the buffer of 25 dots is filled.
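To make the preprocessing more concrete, here is a minimal sketch in plain Python. It is not the code running on the Arduino, and it makes a few assumptions the text above does not spell out: the minimum distance is measured as Euclidean distance to the previously kept dot, the bounding box is scaled to the range 0 to 1 independently per axis, and the features are laid out as interleaved x, y pairs. The function names `collect_points`, `normalize_and_sort`, and `to_features` are made up for illustration.

```python
import math


def collect_points(stroke, n_points=25, min_dist=2.0):
    """Keep points of a drawn stroke in order, skipping any point closer
    than min_dist to the previously kept one (assumed distance rule)."""
    kept = []
    for x, y in stroke:
        if not kept or math.dist(kept[-1], (x, y)) >= min_dist:
            kept.append((x, y))
            if len(kept) == n_points:
                break  # buffer is full
    return kept


def normalize_and_sort(points):
    """Scale the bounding box to [0, 1] per axis (assumption), then sort
    the points by y and, for equal y, by x."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    min_x, min_y = min(xs), min(ys)
    # Guard against a zero-size box, e.g. a perfectly straight line.
    width = (max(xs) - min_x) or 1.0
    height = (max(ys) - min_y) or 1.0
    scaled = [((x - min_x) / width, (y - min_y) / height) for x, y in points]
    return sorted(scaled, key=lambda p: (p[1], p[0]))


def to_features(points):
    """Flatten [(x, y), ...] into [x0, y0, x1, y1, ...] (assumed layout)."""
    flat = []
    for x, y in points:
        flat.extend((x, y))
    return flat
```

Calling `to_features(normalize_and_sort(collect_points(stroke)))` on a stroke with enough points yields the 50 input values for the network. Sorting by y and then x throws away the drawing order, so the same representation can be produced from a static MNIST image and from a joystick stroke.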

With 25 dots, each with an x and a y coordinate, I have 50 input neurons instead of the standard 784. With only one hidden layer of 40 neurons and 10 output neurons, the total parameter count is 2,450 (50·40 weights plus 40 biases for the hidden layer, 40·10 weights plus 10 biases for the output layer). Training with momentum-based stochastic gradient descent achieves an accuracy of around 75%. During training, I use the ReLU and softmax activation functions for the hidden and output layers, respectively. On the Arduino itself, to save computation, I skip the softmax calculation in the output layer and just use the index of the output neuron with the maximum value as the prediction. Since softmax preserves the ordering of its inputs, this does not change the predicted digit.
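The forward pass described above can be sketched in a few lines of plain Python. This is an illustration rather than the Arduino code: the weight layout (`weights[j][i]` connects input `i` to neuron `j`) and all function names are assumptions, and the `softmax` function is only included to show that skipping it leaves the prediction unchanged.

```python
import math


def param_count(n_in, n_hidden, n_out):
    """Weights plus biases of a network with one hidden layer."""
    return n_in * n_hidden + n_hidden + n_hidden * n_out + n_out


def dense(inputs, weights, biases):
    """One fully connected layer, computed neuron by neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    return [max(0.0, v) for v in values]


def softmax(values):
    """Numerically stable softmax; only needed during training."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=values.__getitem__)


def predict(features, w1, b1, w2, b2):
    """Inference without softmax: the largest raw output wins."""
    hidden = relu(dense(features, w1, b1))
    logits = dense(hidden, w2, b2)
    return argmax(logits)
```

`param_count(50, 40, 10)` gives the 2,450 parameters mentioned above. Because the exponential is strictly increasing and the normalization divides every output by the same positive sum, `argmax(logits)` and `argmax(softmax(logits))` pick the same neuron, so inference can skip the exponentials entirely.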

In terms of storage: even though the weights and biases are stored as 4-byte floats, they use only 9.8 kByte of the Arduino Uno’s 32 kByte flash memory (about 30%). During prediction, roughly 440 bytes of values are stored dynamically, using about 20% of the 2 kByte SRAM. Because the matrix multiplications are computed component-wise and sequentially directly on the Arduino’s CPU, the biggest contributor to SRAM usage is storing the neural network values, which takes 400 bytes. Overall, data preprocessing and prediction on the Arduino Uno take only about 70 ms.
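The memory figures can be checked with some quick arithmetic. In the sketch below, I read “neural network values” as one 4-byte float per input, hidden, and output neuron. That reading is an assumption, but it reproduces the 400 bytes exactly. The 440-byte total is taken from the measurement above, not derived.

```python
FLOAT_BYTES = 4
N_IN, N_HIDDEN, N_OUT = 50, 40, 10
FLASH_BYTES = 32 * 1024  # Arduino Uno flash
SRAM_BYTES = 2 * 1024    # Arduino Uno SRAM

# Weights and biases live in flash.
n_params = N_IN * N_HIDDEN + N_HIDDEN + N_HIDDEN * N_OUT + N_OUT
param_bytes = n_params * FLOAT_BYTES

# Assumed reading: one float per neuron value is held in SRAM.
value_bytes = (N_IN + N_HIDDEN + N_OUT) * FLOAT_BYTES

# Reported dynamic usage during prediction (measured, not derived).
dynamic_bytes = 440

flash_share = 100 * param_bytes / FLASH_BYTES
sram_share = 100 * dynamic_bytes / SRAM_BYTES

print(f"parameters: {n_params}, {param_bytes} bytes of flash ({flash_share:.0f}%)")
print(f"neuron values: {value_bytes} bytes of SRAM")
print(f"dynamic total: {dynamic_bytes} bytes of SRAM ({sram_share:.0f}%)")
```

The remaining 40 or so bytes of dynamic memory would then be loop counters and other temporaries, though the exact breakdown depends on the actual sketch.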